AI/ML communities across NOAA

Artificial Intelligence (AI) and Machine Learning (ML) efforts are underway across NOAA. As NCAI develops, this page will give those Communities of Practice a space for discovery and networking.

Featured NOAA AI Research

Each month the NCAI Newsletter features AI-related NOAA research from our community members. The items below highlight research from the current and previous newsletters. Subscribe to the NCAI Newsletter.

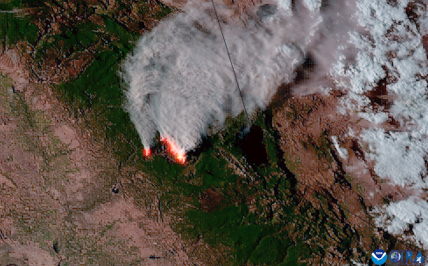

Heightened health risks due to wildfire pollutants: Results from an AI modeling study

The Climate Program Office’s Atmospheric Chemistry, Carbon Cycle and Climate (AC4) Program supported new research examining wildfire impacts on air quality and public health in the continental USA from 2000 to 2020. This work is jointly funded by OAR and NESDIS and executed through the NOAA Atmospheric Composition from Space (NACS) team. Supported researchers Jun Wang from the University of Iowa and Susan Anenberg from George Washington University collaborated with NOAA scientist Shobha Kondragunta, a team from NASA, and four other academic institutions to examine increasing wildfires and associated health risks in the western U.S. This work, published in The Lancet Planetary Health, contributes to an AC4 initiative to understand long-term trends in atmospheric composition and ultimately help plan for and respond to impacts.

The researchers estimated fine particles (PM2.5) and highly toxic black carbon pollutants using a deep learning model, a method of artificial intelligence that teaches computers to process data in a way inspired by the human brain. After overall improvements in air quality and a decrease in premature deaths related to PM2.5 and black carbon until 2010, the western U.S. experienced a concerning reversal. Since 2010, there has been a 55% increase in PM2.5, an 86% increase in black carbon, and 670 more premature deaths annually in this region. The study attributes this shift to the escalating frequency and intensity of wildfires. Notably, 100% of populated areas in the USA experienced PM2.5 pollution exceeding guidelines on at least one occasion, with recent wildfires greatly exacerbating exposure risks in western regions. These findings stress the importance of effective wildfire management policies alongside climate mitigation efforts to safeguard air quality and public health.

Researchers Develop Drone-based System to Detect Marine Debris, Expedite Clean Up

NOAA’s National Centers for Coastal Ocean Science (NCCOS), Oregon State University, and their partners are developing a drone-based, machine-learning system to detect and identify marine debris along the coast. In December 2021, the researchers used beaches near Corpus Christi, Texas, to evaluate devices for the system and refine detection methods.

Marine debris, also known as marine litter, is a global problem that threatens the environment, navigation safety, coastal economies, and, potentially, human health. Detecting and identifying debris quickly and accurately is key to cleanup and response actions that can prevent these impacts. Unmanned Aerial Systems, or drones, offer this capability.

Polarized light reflected from human-made objects often differs from natural objects, such as vegetation, soil, and rocks. Installing a polarimetric camera on a drone could improve debris detection from the air. The researchers tested such a camera, both on the ground, and, with the help of the U.S. Coast Guard, from a helicopter.
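One way to picture how a polarimetric camera separates surfaces is the degree of linear polarization (DoLP), a standard optics quantity computed from intensities measured behind polarizers at four angles. The sketch below is illustrative only: the source does not say which polarization measure the researchers used, and the function names and pixel values are hypothetical.

```python
import math


def linear_stokes(i0, i45, i90, i135):
    """Return (S0, S1, S2) from intensities measured behind linear
    polarizers oriented at 0, 45, 90, and 135 degrees."""
    s0 = 0.5 * (i0 + i45 + i90 + i135)  # total intensity
    s1 = i0 - i90                        # 0-degree vs. 90-degree component
    s2 = i45 - i135                      # 45-degree vs. 135-degree component
    return s0, s1, s2


def degree_of_linear_polarization(i0, i45, i90, i135):
    """DoLP: ideally 0 for unpolarized light and 1 for fully linearly
    polarized light (noise can push real measurements slightly outside)."""
    s0, s1, s2 = linear_stokes(i0, i45, i90, i135)
    if s0 == 0:
        return 0.0  # dark pixel: no signal, report no polarization
    return math.hypot(s1, s2) / s0


# Hypothetical pixel readings (arbitrary units), for illustration only.
unpolarized_pixel = (1.0, 1.0, 1.0, 1.0)  # same intensity at every angle
polarized_pixel = (1.0, 0.5, 0.0, 0.5)    # ideal light polarized at 0 degrees

dolp_unpolarized = degree_of_linear_polarization(*unpolarized_pixel)
dolp_polarized = degree_of_linear_polarization(*polarized_pixel)
```

A per-pixel DoLP image like this could serve as one extra input channel for a detector, alongside ordinary color imagery; whether the project does so is not stated in the source.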

The helicopter flight allowed the team to mimic the height at which the drone system would be flown and simulate what would happen if the drone used a polarimetric camera. Next, the team trained a machine-learning computer program to find and classify the debris in the imagery collected.

Once fully operational, data collected by the drone-based, machine-learning system will be used to make maps that show where marine debris is concentrated along the coast to guide rapid response and removal efforts.

The researchers will provide NOAA Marine Debris Program staff with training in the use of the new system, along with a standard operating procedures manual. The project is a collaboration among NCCOS, NOAA’s Marine Debris Program, Oregon State University, ORBTL.AI, and Genwest Systems, Inc.

NOAA uses artificial intelligence to translate forecasts, warnings into Spanish and Chinese

NOAA’s National Weather Service (NWS) has provided manual translations of weather forecasts and warnings in Spanish for the past 30 years, but now the agency has a new tool to be more accurate, efficient and equitable.

Through a series of pilot projects over the past few years, NWS forecasters have been training artificial intelligence (AI) software for weather, water and climate terminology in Spanish and Simplified Chinese, the most common languages in the United States after English. NWS will add Samoan and Vietnamese next, and more languages in the future.

This effort was supported by the House Appropriations Committee in NOAA’s fiscal year 2023 Congressional budget.

“Getting timely weather alerts ahead of a dangerous storm in multiple languages helps ensure that potentially lifesaving information is available to everyone,” said U.S. Rep. Grace Meng (D-NY), a member of the House Appropriations Subcommittee on Commerce, Justice, and Science. “By capitalizing on the advancements of AI technology, we will be able to provide these alerts in even more languages in the near future. I want to applaud the National Weather Service and Lilt for working with me to meet people where they are. By helping to be more inclusive and further increase safety in our many diverse communities, we can protect more people from severe weather storms in the United States.”

“This language translation project will improve our service equity to traditionally underserved and vulnerable populations that have limited English proficiency,” said Ken Graham, director of NOAA’s National Weather Service. “By providing weather forecasts and warnings in multiple languages, NWS will improve community and individual readiness and resilience as climate change drives more extreme weather events.”

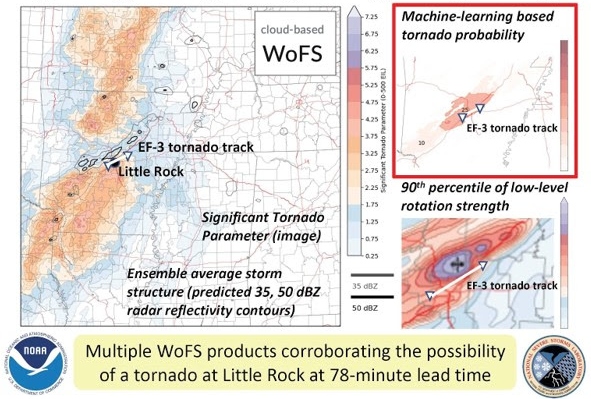

The Warn-on-Forecast System: A cutting-edge storm-scale NWP system made even better by AI

The NOAA National Severe Storms Laboratory (NSSL) and Cooperative Institute for Severe and High-Impact Weather Research and Operations (CIWRO) are leading the development of the Warn-on-Forecast System (WoFS), a cloud-based, rapidly updating, convection-allowing ensemble designed to support watch-to-warning (0–6 hr) operations for tornadoes, flash floods, and other high-impact weather. The WoFS is scheduled for operationalization at the National Weather Service (NWS) Unified Forecast System around 2027, but is already routinely used by many NWS Weather Forecast Offices, the Storm Prediction Center, and the Weather Prediction Center.
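As a rough illustration of how an ensemble can yield probabilistic guidance, a simple baseline is to report the fraction of ensemble members that exceed a threshold at a given location. This is a generic sketch, not the WoFS method or its ML products; the function name, the rainfall values, and the threshold are all hypothetical.

```python
def exceedance_probability(member_values, threshold):
    """Fraction of ensemble members whose forecast value meets or
    exceeds the threshold (a simple, uncalibrated probability)."""
    if not member_values:
        raise ValueError("need at least one ensemble member")
    hits = sum(1 for value in member_values if value >= threshold)
    return hits / len(member_values)


# Hypothetical one-hour rainfall forecasts (mm) from four members at one grid point.
member_rainfall_mm = [12.0, 30.0, 55.0, 80.0]

# Two of the four members reach the hypothetical 50 mm threshold.
probability = exceedance_probability(member_rainfall_mm, threshold=50.0)
```

Raw member fractions like this are often poorly calibrated, which is one reason learned post-processing can add value on top of the ensemble itself.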

A major strength of the WoFS is its use of machine learning (ML) to generate probabilistic predictions of severe thunderstorm hazards (tornadoes, large hail, damaging wind). Forecasters have frequently found these ML products to be helpful during severe weather operations. Additional ML models are in development for predicting not only severe weather, but also heavy rainfall and regions where WoFS forecasts of storms will be unusually high- or low-quality. Inspired by the recent success of emerging global data-driven AI-NWP models, the NSSL WoFS team has begun exploring the concept of a data-driven WoFS, where forecasts are generated by deep learning models trained on archived WoFS output.

In February, NOAA entities involved with the WoFS received a 2023 DOC Gold Medal Award for “scientific and engineering excellence in developing a revolutionary prediction tool that provides short-term probabilistic thunderstorm guidance.” AI promises to play an increasingly vital role as NSSL and other agencies advance the frontiers of storm-scale prediction.